The Evolution of Artificial Intelligence

From symbolic reasoning to generative models—a journey through decades of innovation.

Key Takeaways

- AI began with symbolic reasoning and rule-based expert systems in the mid-20th century.

- Machine learning shifted focus from hand-coded rules to data-driven pattern recognition.

- Deep learning unlocked breakthroughs in vision, speech, and language through layered neural networks.

- Generative AI—powered by transformers—now creates novel content at scale.

- Real-world adoption spans healthcare, finance, creative industries, and beyond.

- Ethical challenges and technical limitations remain critical areas of ongoing research.

Early Foundations

The roots of artificial intelligence trace back to the 1950s, when pioneers like Alan Turing and John McCarthy explored whether machines could think. Early systems relied on symbolic logic—encoding human knowledge as explicit rules. Researchers built programs that could prove mathematical theorems or play simple games, demonstrating that computers could manipulate symbols in ways that resembled reasoning.

These foundational efforts established core concepts—search algorithms, knowledge representation, and inference engines—that remain relevant today. Yet the brittleness of hand-coded rules soon became apparent, setting the stage for decades of iterative refinement.

- Turing Test proposed (1950)

- Dartmouth Conference coins “AI” (1956)

- Logic Theorist proves theorems (1956)

- Perceptron algorithm introduced (1958)

- ELIZA chatbot simulates conversation (1966)

- Shakey the Robot navigates environments (1969)

- Prolog logic programming language (1972)

- First AI winter begins (mid-1970s)

Expert Systems & AI Winters

In the 1980s, expert systems promised to capture human expertise in narrow domains—diagnosing diseases, configuring computers, or analyzing financial data. Companies invested heavily, and for a time, AI seemed poised for commercial success. These systems encoded domain knowledge as if-then rules, consulting inference engines to deliver recommendations.
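To make the if-then idea concrete, here is a minimal sketch of a forward-chaining inference loop. The rules and fact names are hypothetical toy examples, not drawn from any real expert system, and production systems of the era were far larger and more elaborate.

```python
# Hypothetical toy rules: (set of required facts, fact to conclude).
RULES = [
    ({"fever", "cough"}, "flu_suspected"),
    ({"flu_suspected", "short_of_breath"}, "refer_to_doctor"),
]


def forward_chain(facts, rules):
    """Repeatedly fire any rule whose conditions are all known facts."""
    known = set(facts)
    changed = True
    while changed:
        changed = False
        for conditions, conclusion in rules:
            # A rule fires when every condition is already known.
            if conditions <= known and conclusion not in known:
                known.add(conclusion)
                changed = True
    return known


derived = forward_chain({"fever", "cough", "short_of_breath"}, RULES)
print(sorted(derived))
```

Every new case the system should handle means another hand-written entry in `RULES`, which hints at why upkeep grew expensive as domains widened.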

Yet rule-based systems proved brittle, and costly to maintain and scale. When expectations outpaced reality, funding dried up in the late 1980s, ushering in the second AI winter. The lesson: symbolic approaches alone couldn’t handle the complexity and ambiguity of real-world problems.

- High maintenance costs for rule updates

- Difficulty capturing tacit human knowledge

- Limited generalization beyond narrow domains

“AI winters taught us that hype without robust methods leads to disillusionment—progress requires patience, data, and iterative learning.”

Machine Learning Era

Machine learning shifted the paradigm from hand-coded rules to data-driven pattern recognition. Instead of programming explicit logic, researchers trained algorithms on examples, letting models discover relationships in data. Techniques like decision trees, support vector machines, and ensemble methods gained traction in the 1990s and 2000s.

This era brought AI into everyday products such as spam filters, recommendation engines, and fraud detection. The availability of larger datasets and improved computational resources accelerated progress, laying the groundwork for the deep learning revolution to come.

- Supervised learning from labeled examples

- Unsupervised clustering and dimensionality reduction

- Reinforcement learning for sequential decision-making
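As a rough illustration of learning from labeled examples, the sketch below fits a decision stump, which is a one-level decision tree. Nobody writes the threshold by hand; the code picks it from the data. The function names and the toy spam data are assumptions made up for this example.

```python
def fit_stump(values, labels):
    """Pick the threshold that misclassifies the fewest training examples.

    The stump predicts 1 when value > threshold, else 0.
    Returns None if there are fewer than two distinct values.
    """
    best_threshold, best_errors = None, len(values) + 1
    points = sorted(set(values))
    # Candidate thresholds: midpoints between neighbouring distinct values.
    candidates = [(a + b) / 2 for a, b in zip(points, points[1:])]
    for threshold in candidates:
        errors = sum((v > threshold) != bool(y) for v, y in zip(values, labels))
        if errors < best_errors:
            best_threshold, best_errors = threshold, errors
    return best_threshold


def predict(threshold, value):
    """Apply the learned rule to a new value."""
    return 1 if value > threshold else 0


# Hypothetical toy data: suspicious-word count per message, 1 = spam.
counts = [0, 1, 2, 6, 7, 9]
is_spam = [0, 0, 0, 1, 1, 1]

threshold = fit_stump(counts, is_spam)
print(threshold)              # 4.0
print(predict(threshold, 8))  # 1
```

If the data changes, rerunning `fit_stump` yields a new rule with no manual editing, which is the core contrast with hand-coded expert systems.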

Deep Learning

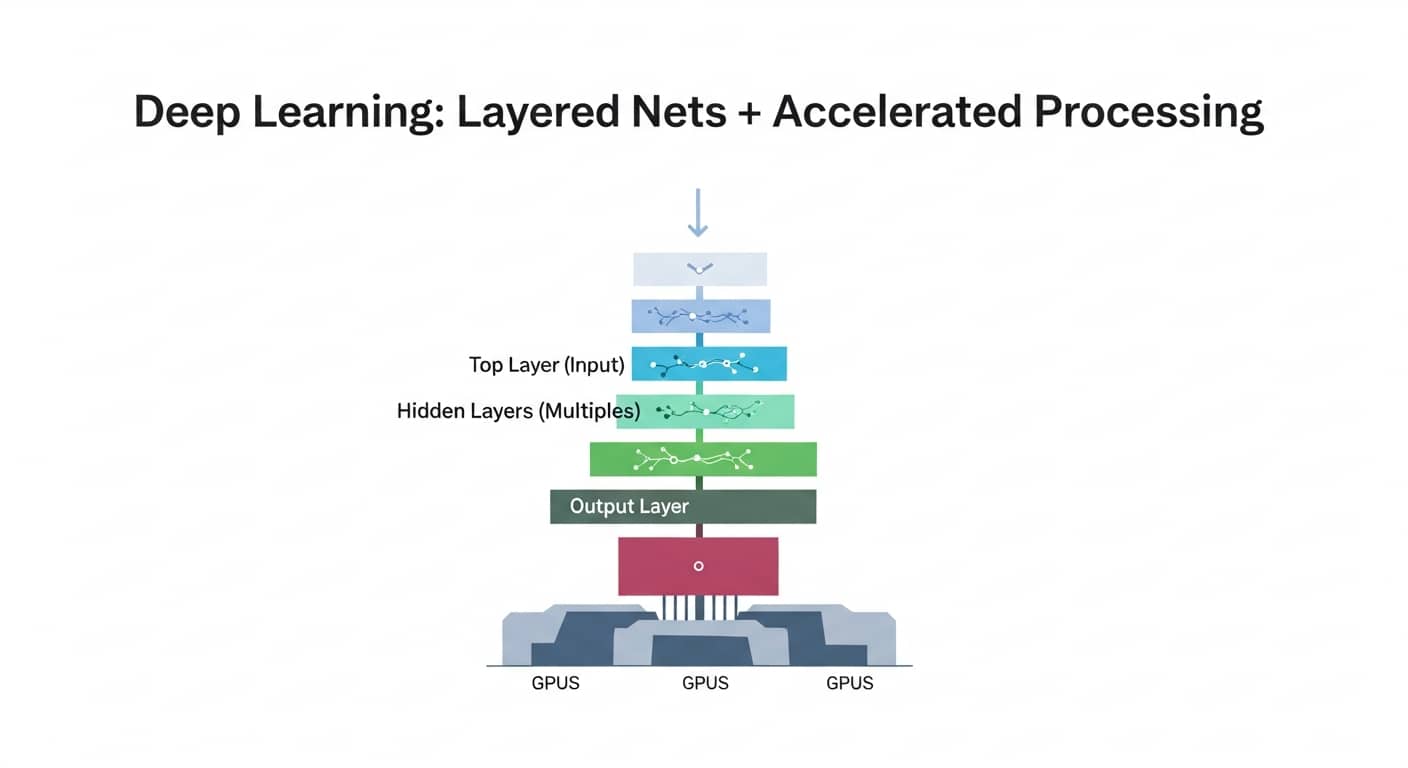

Deep learning, built on neural networks with many layers, unlocked breakthroughs in computer vision, speech recognition, and natural language processing. Powered by GPUs and vast datasets, convolutional and recurrent networks matched or approached human-level accuracy on specific benchmarks that earlier methods had struggled with. ImageNet competitions and speech benchmarks showcased rapid gains.

This era transformed industries—autonomous vehicles, medical imaging, voice assistants—and set the stage for generative models. Deep learning’s ability to learn hierarchical representations from raw data proved transformative, though it also introduced new challenges around interpretability and data requirements.

Caption: Layered neural networks process data hierarchically, leveraging GPU acceleration for training at scale.

- Convolutional networks for image recognition

- Recurrent networks for sequential data

- Transfer learning and pre-trained models
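The value of stacking layers can be seen in a very small example. The sketch below wires two layers of threshold neurons to compute XOR, a function that no single neuron of this kind can represent. The weights are set by hand purely for illustration; real deep networks learn their weights from data, typically by gradient descent, and use far more units and layers.

```python
def step(x):
    """Threshold activation: 1 if the weighted sum is positive, else 0."""
    return 1 if x > 0 else 0


def neuron(inputs, weights, bias):
    """One unit: weighted sum of inputs plus bias, passed through step()."""
    return step(sum(i * w for i, w in zip(inputs, weights)) + bias)


def xor_network(a, b):
    # Hidden layer: two intermediate features built from the raw inputs.
    h_or = neuron([a, b], [1, 1], -0.5)   # fires if a OR b
    h_and = neuron([a, b], [1, 1], -1.5)  # fires if a AND b
    # Output layer: combines the hidden features (OR but not AND).
    return neuron([h_or, h_and], [1, -1], -0.5)


for a in (0, 1):
    for b in (0, 1):
        print(a, b, xor_network(a, b))
```

The hidden units act as simple learned-style features, and the output unit reasons over those features rather than the raw inputs, which is the hierarchical idea in miniature.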

“Generative models don’t just classify—they create, opening doors to automation and creativity while demanding vigilance around misuse.”

Real-World Impact

AI now powers applications across healthcare (diagnostic imaging, drug discovery), finance (fraud detection, algorithmic trading), creative industries (content generation, design tools), and beyond. Organizations deploy models to automate routine tasks, personalize experiences, and uncover insights from vast datasets.

Yet adoption varies—some sectors embrace AI rapidly, while others proceed cautiously due to regulatory, ethical, or technical constraints. Success stories highlight efficiency gains and innovation, while failures underscore the importance of domain expertise and human oversight.

- Healthcare: faster diagnoses, personalized treatment plans

- Finance: risk modeling, automated compliance checks

- Creative: content drafting, design prototyping, music composition

Limitations & Risks

Despite progress, AI systems remain brittle, opaque, and prone to bias. Models trained on flawed data can perpetuate stereotypes. Adversarial inputs can fool classifiers. Generative models can hallucinate facts or produce harmful content. These limitations demand rigorous testing, diverse datasets, and ongoing monitoring.

Ethical concerns—privacy, accountability, job displacement—require multidisciplinary collaboration. Policymakers, researchers, and practitioners must balance innovation with safeguards, ensuring AI serves society responsibly.

- Bias amplification from training data

- Lack of interpretability in deep models

- Vulnerability to adversarial attacks and misuse

“Responsible AI isn’t optional—it’s the foundation for trust, adoption, and long-term value.”

Future Outlook

The next wave of AI will likely emphasize efficiency, interpretability, and multimodal reasoning. Smaller, specialized models may complement large generalists. Advances in reinforcement learning from human feedback and constitutional AI aim to align systems with human values. Regulatory frameworks will mature, shaping how AI is developed and deployed.

Collaboration between academia, industry, and civil society will be critical. As AI becomes more capable, the focus must shift from raw performance to robustness, fairness, and societal benefit—ensuring technology amplifies human potential rather than replacing it.

- Efficient models for edge deployment

- Explainable AI for high-stakes decisions

- Human-AI collaboration frameworks

Conclusion

The evolution of artificial intelligence—from symbolic reasoning to generative models—reflects decades of experimentation, setbacks, and breakthroughs. Each era contributed insights that shape today’s landscape, reminding us that progress is iterative and context-dependent. Understanding this history helps us navigate current capabilities and limitations with clarity.

As you explore AI for your own projects, start small: pick one workflow, pilot with human review, and scale thoughtfully. Responsible adoption balances innovation with oversight and keeps human expertise in the loop. The journey continues: stay curious, critical, and collaborative.